Money wins, every time. They’re not concerned with accidentally destroying humanity with an out-of-control and dangerous AI who has decided “humans are the problem.” (I mean, that’s a little sci-fi anyway, an AGI couldn’t “infect” the entire internet as it currently exists.)

However, it’s very clear that the OpenAI board was correct about Sam Altman, given how quickly he and many employees bailed to join Microsoft directly. If he was so concerned with safeguarding AGI, why not spin up a new non-profit?

Oh, right, because that was just Public Relations horseshit to get his company a head-start in the AI space while fear-mongering about what is an unlikely doomsday scenario.

So, let’s review:

- The fear-mongering about AGI was always just that. How could an intelligence that requires massive amounts of CPU, RAM, and database storage even conceivably be able to leave the confines of its own computing environment? It’s not like it can “hop” onto a consumer computer with a fraction of the same CPU power and somehow still be able to compute at the same level. AI doesn’t have a “body” and even if it did, it could only affect the world as much as a single body could. All these fears about rogue AGI are total misunderstandings of how computing works.

- Sam Altman went for fear mongering to temper expectations and to make others fear pursuing AGI themselves. He always knew his end-goal was profit, but like all good modern CEOs, they have to position themselves as somehow caring about humanity when it is clear they couldn’t give a living flying fuck about anyone but themselves and how much money they make.

- Sam Altman talks shit about Elon Musk and how he “wants to save the world, but only if he’s the one who can save it.” I mean, he’s not wrong, but he’s also projecting a lot here. He’s exactly the fucking same, he claimed only he and his non-profit could “safeguard” AGI and here he’s going to work for a private company because hot damn he never actually gave a shit about safeguarding AGI to begin with. He’s a fucking shit slinging hypocrite of the highest order.

- Last, but certainly not least. Annie Altman, Sam Altman’s younger, lesser-known sister, has held for a long time that she was sexually abused by her brother. All of these rich people are all Jeffrey Epstein levels of fucked up, which is probably part of why the Epstein investigation got shoved under the rug. You’d think a company like Microsoft would already know this or vet this. They do know, they don’t care, and they’ll only give a shit if the news ends up making a stink about it. That’s how corporations work.

So do other Lemmings agree, or have other thoughts on this?

And one final point for the right-wing cranks: Not being able to make an LLM say fucked up racist things isn’t the kind of safeguarding they were ever talking about with AGI, so please stop conflating “safeguarding AGI” with “preventing abusive racist assholes from abusing our service.” They aren’t safeguarding AGI when they prevent you from making GPT-4 spit out racial slurs or other horrible nonsense. They’re safeguarding their service from loser ass chucklefucks like you.

40+ years on this planet have made me 100% certain that no one with the power to safeguard AGI will make any legitimate effort to do so. Just like we have companies spending millions greenwashing while they pollute more than ever, we’ll have plenty of lip-service about it but never anything useful.

Anyone who thinks America or your local government is going to regulate AI is delusional, especially in the face of companies planning to build AI data centers on ships and float them into international waters where the law does not apply to them. If not there, they will put them in space. Unregulated AI is coming whether you like it or not, unless we destroy the entire planet, which I would not rule out. Sure this commenter would agree on that.

An AI data center acting as a rogue state will just be sunk the moment they actually become a legitimate problem.

And billionaires will pay their fair share of taxes.

Depends on how much money and power they’re entangled with, and who they threaten

I don’t disagree that the people with money who are funding this kind of development don’t care about regulations or safety.

That said, the idea that they’ll do it out on the open sea or in space is absolutely laughable. Those ideas pitched so far completely ignore all the obvious engineering problems. Not to mention that going to international waters to avoid regulations means that the navy of that country you’re thumbing your nose at now has free rein on you.

They are too busy trying to exploit it for even more.

Who is?

My biggest concern with generative AI is all of the CEOs that will eagerly seize the opportunity (and some already have) to fire staff and offload their work onto their remaining employees so they can use ChatGPT to make up for lost productivity. Easy way for them to further line their pockets without increasing pay for anyone else, further dividing the worker/CEO wage disparity and class divide.

All this noise just to serve up ads

Removed by mod

How do you know?

Removed by mod

Because I’m smarter than you isn’t really an answer

Are you able to articulate at least one specific reason that we are nowhere close to developing AGI?

Without any specific reason being stated, I’m tempted to believe you are just confidently declaring this to protect yourself from fear.

I agree we’re far out, but not as far as you think. Advancements are insane and AGI could be here in 5-10 years. The way the industry has been attempting it for the past decade is wrong though; training should be more in-depth than images/videos. I think a few are starting to understand how to do more in-depth training, so even more progress will start soon.

I think 5-10 years is optimistic given how much hand tuning / manual training has to take place. Given how insanely long it’s taken to get where we are and how many times I’ve heard machine intelligence oversold, and based on what LLMs can do I think we are still many decades out.

That said, what ML and AI can do is still game changing and will still have an impact even if it isn’t some kind of scary skynet AGI thing.

We’ve been promised self driving cars for over 10 years and still aren’t close, I think we’re a long ways away from AGI.

I would even argue the only way to get self-driving cars that actually work well is with AGI. I don’t think we’re going to get either in a very long time.

Self-driving cars are an area in which straight AI would probably work very well. I don’t think we need a full-on intelligence to drive around.

Anyway, we already do have self-driving cars, they’re just not very mainstream yet. Mostly because they’re prohibitively expensive and no one trusts them exactly, but that’s more because there are other idiot humans around than anything else.

Yeah if we were at the point with AGI that we’re at with self driving cars, then AGI would be fully implemented, just still with some safety issues and only in the hands of a few corporations.

That’s hardly “sci fi”. That’s currently existing behind closed doors.

To be fair, that promise came from someone who is clearly a conman of a swindler. If you ever took that promise seriously… I’m sorry.

Elon/Tesla is far from the only outfit working on self driving. GM’s Cruise is the one whose car recently dragged a person for dozens of feet.

For sure, but the traditional motor vehicle companies that were dragged kicking and screaming into the EV game were not making the same predictions of how quickly we would get to self-driving. That was pretty much all Elon Musk setting the absurd timelines, and a handful of tech companies who also were pursuing driverless tech. I would say the “serious” car companies never promised that, but maybe I’m wrong and just never saw it.

No I absolutely agree with you, I’ve been skeptical of all the self driving news for years. However, I was using it as a parallel to other AI based discussions. While Elon may have been over hyping what was going to be possible in the near future, there is no evidence that other people aren’t doing the same now.

Just like with autonomous vehicles, we’ve made impressive leaps in what ML can do, but I think there is still a long road ahead.

Entirely agreed, we have such a long path ahead.

I think you are being optimistic.

If you are old enough to remember AIM chatbots, this current generation is maybe multiple times more advanced, not exponentially so. From what I have seen, all the incredible advancements have been in image production.

This leads me to believe that AGI has never been the true commercial goal, but rather an advancement of propaganda media and its creation.

> This leads me to believe that AGI has never been the true commercial goal, but rather an advancement of propaganda media and its creation.

Uh, what? Isn’t it more likely because text/image generation isn’t even on the same plane of difficulty as AGI?

Imagine if we had FTL, that would be so cool.

If we actually had AGI I suppose it’s possible we would have FTL.

deleted by creator

Let’s see what nuggets I can find in the post hist- didn’t even need to scroll past the first page.

Somewhere between

> A bunch of incapable, spoilt, completely insane men-children with too much money think they can save the world.

and

> A bunch of scam artists build an artificial human who they claim can talk and draw and reason just like a real human would.

For the CEOs of this brave new AI world this probably changes depending on their level of hangover and/or midlife crisis.

It’s common business practice for the first big companies in a new market/industry to create “barriers to entry”. The calls for regulation are exactly that. They don’t care about safety–just money.

The greed never ends. You’d think companies as big as Microsoft would just be like “maybe we don’t actually need to own everything” but nah. Their sheer size and wealth is enough of a “barrier to entry” as it is.

AGI is still science fiction right now

insert spacesuit “always has been” meme here.

For your first point, sure, it couldn’t run itself on consumer hardware, but it could design new zero-day malware faster than any human and come up with new scams to get it onto people’s machines.

It could also design a more efficient version of itself to spread that will run on lower-powered hardware.

Just so we’re clear, we all get that these models don’t run continuously, right? They run for a solution to a specific prompt.

All of these scenarios are based on a black box where Number 5 gets struck by lightning or Geordi asks for a rival that can best Data. It requires a different thing entirely that operates in a completely different way. You should absolutely prepare for the fact that a self-driving car may accidentally cause a car crash. It’s absurd to prepare for the scenario where Stephen King’s Christine happens.

I’m not talking about language models of today though, this is a hypothetical for if we do ever come up with a true agi

Sure, but at that point that’s as speculative as it was after people first saw 2001: A Space Odyssey. It’s not based on current tech, there’s no great indication of when (or if) the tech is going to enable it or through what means.

Half of the risks being highlighted are pure sci-fi, most of the others have been in play since social media and online companies started to monetize big data over a decade ago.

This also annoys me. Today’s “AI”, like ChatGPT, has nothing on true artificial intelligence. They made the next best algorithm to do many things that were impossible to do before, and are selling it like it’s the end of the world. What do you think people first thought about phones? The internet? All data accessible, everywhere, all the time, yet we grew accustomed to it, evolved (or devolved) to live with it. Who’d have thought of a magic box that can play back any event recorded, make a digital interactable world, contact any other human instantly, or, recently, talk with me?

It’s just another step, everyone needs to calm down. I now have a website to do my homework, it was about time. I won’t end the world with it.

Those things do have impact. Sometimes very negative impact. I was very optimistic about early data processing when the first search engines popped up, and eventually a lot of the bad predictions happened. With social media, rather than search engines, but they did pan out. Didn’t end the world. May have ended liberal democracy, though, give it a minute.

But the point is those were predictions based on the tech we actually had. Oh, we can access, index and serve all data on connected computers based on algorithmic searches? That’s messed up.

But at least some of the fearmongering here is based on tech that is not the tech that we made. It’s qualitatively different.

And it’s a problem, because some of the fearmongering is actually accurate and some of the fearmongering should have happened when Facebook and Google started doing facial recognition on billions of people based on implicit consent, or when they started using “dumb” algorithms to create individual profiles of those billions of people for commercial use. Or when every image we see in mass and social media started being heavily doctored by default through manual and automated means. But we only got scared about it when it roughly aligned with Terminator and War Games because we’re really dumb, and now we’re letting those same gross corporations use the fear to try and keep upcoming competitors (and particularly open source competitors) out of the market by endorsing legislation to get grandfathered into a heavily regulated business sector.

It’s honestly depressing on every possible angle. I’ve said this before: we finally taught computers to speak like in Star Trek and we immediately made it the most frustrating, sad version of that possible and everybody is angry. For the wrong reasons.

We really suck sometimes.

Lots of people suck, but you don’t. I really like and appreciate everything you wrote here.

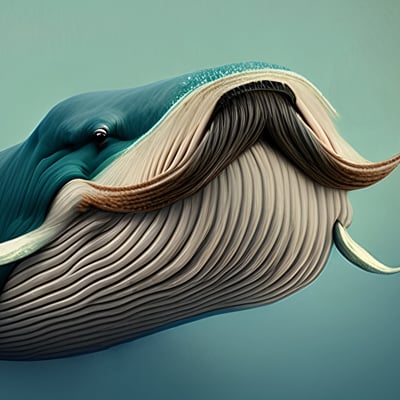

Humans and their computers:

It’s regularly amazing how smart humans are and at the same time so frustratingly dumb.

Oh, I suck as much as anybody. I’m terrible at parsing genuine praise, for instance.

But you’re right about the last part. I mean, the guys that got out of the gate with this stuff first have been publicly imploding for the past three days, and they aren’t even the dumbest people involved in this.

> I’m terrible at parsing genuine praise

Average male these days. Anyways, I agree with you. All of these lies and everything are for money. Back in the day I remember people theorizing about tech (in general, like CPUs) being way better than what’s sold on the market, so that they’d be able to make a 2nd generation. It was a theory without base, but you saw it happen with AI. First couple of weeks it was wonderful, then it slowly got more and more restricted, slow and dumb. But the fact is that it’s still groundbreaking tech, so people are impressed, and are using it. But can you imagine the un-jailed version for a select few privileged people?

The fact that all of this (the dumbing down and restricting part) is for “protecting the children” is infuriating. Go to a different website, click a highlighted option in a pop-up, and you have all the gore, porn, vore, and fetishes that you didn’t even know existed. But swearing on the other website?? Strictly prohibited.

It is absolutely speculative, never claimed it’s not. Something like GPT was purely speculative science fiction until a few years ago though.

Not saying it’s going to happen, but if it does and it is true agi it could absolutely take over the world, that’s my only point

Those don’t follow from each other, though. Handheld wireless computers were purely speculative until the 2010s, but that doesn’t mean we were on the brink of figuring out teleportation.

People have been assuming computers would yield AGI since we first made an electric calculator. First through sheer processing power, then through improved computation techniques, then neural networks. Figuring out speech and vision are probably part of that process, but AGI does not arise from them without an indeterminate, possibly unknowable amount of major steps.

And as for world-ending threats, how about we get past, say, Trump, Putin and all the natural general intelligences that are very real and in the process of doing the same first? Or, you know, we apply that level of concern about tech that we do have, like social media disrupting democracy, private universal surveillance or digital oligopolies driving endless inequality? Or, hey, global warming.

I agree rogue AI is a much cooler problem to speculate about, which is why we keep writing sci-fi about it, but we have more pressing issues.

It’s not like the AI sanctions were ever about actually protecting humanity anyway, as it turns out recently it was just to attempt to stall until Musk could get his own language model off the ground.

Again though, my point was never that we need to be concerned right this instant that AGI is around the corner, it’s purely that if it were to happen it could absolutely propagate itself.

What’s almost more scary is if it’s not sentient, and it’s just an incredibly advanced language model that acts in the way it thinks an AI should (based on all the fiction we have of AI manipulating humans and taking over the world that it’s been trained on)

But… how do you know it could?

I mean, why on Earth would you deliberately make an AGI and make it able to do that? It’s not like you HAVE to make an AGI that is able to make other AIs. That’s not a trivial task, it doesn’t just… happen. And you’re presuming that it’d want to do that and that we wouldn’t have control over that. Which you don’t know, because now we’re deep into sci-fi territory, so it’s about as likely as the mapping of genome leading to a genetic class system.

And that last scenario there is not just sci-fi, but the same old sci-fi, where AGI emerges from a LLM because magic and it becomes eEeEvil because dramatic convenience. That scenario is entirely impossible, because a LLM does not run continuously or autonomously and it has the short term memory of… a thing with very small short term memory, so you’d have to ask it to do that first, then wait a considerable amount of time for a response and then watch it pretend to do that because it’s a language model and it can’t actually do any of that. Literally the “make an opponent that can beat Data” scenario, so we’re doing Star Trek now.

First part is feasible but not enough to “destroy humanity.” More like a long-term frustration.

Second point is extremely unlikely and in the realm of sci-fi. You can’t just magic up something that works the same on a hundredth of the hardware.

Last I checked you can just unplug these things and they go away, just like any other computing device.

So even if the first scenario happened, it’s a pretty easy fix.

AI takes a lot of computing power to train; once it’s trained, it can usually run on a laptop.

Depends on the model and laptop. ChatGPT won’t be running on consumer hardware any time soon.

It can technically run it just can’t do it well enough to be usable, it’d probably only pump out a couple of words a day

The first computer that could beat the best humans at chess was Deep Blue, which took a whole supercomputer. Now we have Stockfish, which can beat any human 99 times out of 100 and runs on your average phone.

While I’m skeptical of the feasibility and threat of SAI, as computers and AI methods improve we can run what previously took a supercomputer with far less hardware.

Actually, that’s more of a misconception. We’ve literally had more than two decades of electronics miniaturization since then.

Are you really going to argue that since ENIAC took up a whole room, it must have had boatloads of computing power? By modern standards, it’s way less powerful than a Raspberry Pi.

Also, it isn’t just miniaturization: each of the 30 CPUs in the original Deep Blue ran at a couple hundred megahertz at most.

That phone is likely a quad-core CPU (which means technically four CPUs) all running at 1.5+ gigahertz.

So is it really that surprising it can now do stuff Deep Blue did with a fraction of the CPU cycles?

You absolutely can magic up something that runs far more efficiently: just look at GPT-3 vs 3.5, or the many open source models that have found that better training with a smaller number of parameters makes for much more performant models.

- LLM ≠ AGI

- Models made for specific purpose instead of general purpose are of course going to need less CPU cycles because you aren’t creating an AGI, you are creating a specialized tool.

- It still takes far longer to produce a result on smaller hardware. An AGI that takes days to do anything isn’t exactly that dangerous.

- Why are you even talking about AGI needing a certain amount of compute then? You’re using LLM numbers.

Go have a read of some doomsday scenarios. I’m not saying they’re right, but it feels plausible to me.

> Go have a read of some doomsday scenarios. I’m not saying they’re right, but it feels plausible to me.

I have, and I have been working with computer hardware and networking my whole life. I have a degree in network administration. I think the fears are absolutely overblown by people who don’t understand hardware.

Most people don’t own fancy new computers. Most people are still running shit from 10 years ago and don’t want to have to upgrade. The idea that the world could be taken over by an AGI seems literally fancifully absurd to me.

Bro, I run AI projects on a consumer fucking laptop. Sure, they aren’t exactly LLM levels of complexity, but anybody with a need for serious hardware for a bit will just rent it off AWS or so.

I understand that LLMs are not AGI, but as AGIs don’t currently exist, I think it’s fair to assume that the same principle that applies to literally all software (over time, people discover more efficient ways to do things) will also apply to it.

Also we don’t know how slow or fast it will end up being, some deep learning models are incredibly fast, some are slow

Once you get down to individual bits, you can’t make code any smaller. You have a finite number of bits to work with. In networking, especially.

There is literally an upper (lower?) limit on how small you can make code.

Like others in the thread, I think you’re confusing the great pace at which we have increased the hardware speed of computers and the miniaturization of computer components with “code” somehow getting “smaller” which… isn’t really a thing when you’re dealing with something as complex as this. You can’t run an LLM on the same number of lines you can print up “Hello World!”

It’s way more that we have more CPU speed, more RAM, and faster storage with more space for data to live.

print(1) print(2) print(3) print(4) print(5)

for I=1,5: print(I)

There you go I made code smaller

I also never said anything about making code smaller I said making it more efficient. It’s not about compressing it it’s about finding better, less CPU expensive ways to do things, which we absolutely do
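To put the “more efficient, not smaller” distinction in concrete terms, here’s a minimal Python sketch. The function names are made up for illustration and have nothing to do with how any real model is optimized. Both functions give the same answer and are about the same size in source code, but one does n additions and the other does a fixed handful of operations:

```python
def sum_loop(n: int) -> int:
    """Add up 1..n the slow way: one addition per number."""
    total = 0
    for i in range(1, n + 1):
        total += i
    return total


def sum_formula(n: int) -> int:
    """Same result via the closed form n(n+1)/2: constant work regardless of n."""
    return n * (n + 1) // 2


if __name__ == "__main__":
    n = 1_000_000
    # Identical output, wildly different amounts of CPU work.
    print(sum_loop(n) == sum_formula(n))
```

Whether a hypothetical AGI could find savings like that for itself is a separate question; this only shows that “less CPU” and “fewer bytes of code” are different things.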

Another AI-based example: video chats currently work by streaming video, but there’s a technology in development that takes one screenshot, sends that, then sends expression data to be reconstructed on the other side.

Far more efficient network wise

Hardware speed has increased, sure, but that applies to both consumer hardware and servers. All a theoretical AGI would have to do is improve on its own training/code enough that it will run at all on consumer-level hardware (which language models currently will do).

(For reference, llama 40B runs just fine on my ThinkPad from 2016, pre-trained models are not that difficult to run, training is the expensive part)
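For a rough sense of scale, here’s a back-of-the-envelope sketch of how much memory just the weights of a pre-trained model take at different precisions. The helper name is made up, the 40B figure is simply the one quoted above, and the estimate assumes the weights dominate (it ignores activations, the KV cache and runtime overhead), so treat it as a lower bound rather than a benchmark:

```python
def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    # 16-bit is a common full-precision format for inference; 8-bit and 4-bit are quantized.
    for bits in (16, 8, 4):
        print(f"40B params at {bits}-bit: ~{weight_memory_gb(40e9, bits):.0f} GB")
```

So how usable a model is on a laptop mostly comes down to how aggressively it is quantized and how much RAM the machine has.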

You’re misunderstanding what I mean by “making code smaller.” Because… that’s not that much smaller. In UTF-8, each of these characters is 1 byte, with some Unicode characters being as many as 4 bytes. This code snippet is 64 bytes. Can you magically make characters smaller than 1 byte? You can’t. There’s a literal physical limit on how small you can make code.

Sure, you can come up with clever ways to use less code. But my point is there is a limit on how much less code you can use, and that always is based on physical hardware limitations. Just because modern hardware makes it feel limitless doesn’t mean it is.

EDIT: Got my data sizes mixed up.
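For anyone who wants to check the data sizes themselves: how many bytes a character takes depends on the encoding. A quick sketch, assuming the two snippets from upthread are stored as plain text (the variable names are just for illustration):

```python
snippet_unrolled = "print(1) print(2) print(3) print(4) print(5)"
snippet_loop = "for I=1,5: print(I)"

for name, text in (("unrolled", snippet_unrolled), ("loop", snippet_loop)):
    utf8_bytes = len(text.encode("utf-8"))       # ASCII characters take 1 byte each
    utf16_bytes = len(text.encode("utf-16-le"))  # 2 bytes each for these characters
    print(f"{name}: {len(text)} chars, {utf8_bytes} bytes UTF-8, {utf16_bytes} bytes UTF-16")

# Non-ASCII characters are where UTF-8 grows to 2-4 bytes per character.
print(len("é".encode("utf-8")), len("😀".encode("utf-8")))
```

In UTF-8 the two snippets come to 44 and 19 bytes, so the loop version really is smaller, but there is still a floor you can’t go below.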

-

> Money wins, every time.

And right there, you answered your own (presumably rhetorical) question.

The money people jumped on AI as soon as they scented the chance of profit, and that’s it. ALL other considerations are now secondary to a handful of psychopaths making as much money as possible.

“A handful of psychopaths making as much money as possible”

Capitalism in a nutshell

Unrelated but is your name a reference to Amy Likes Spiders? That was my favorite poem in DDLC.

Probably subconsciously. I came up with the name long after playing the game, but I wasn’t thinking of it when I made it. I actually am just a lady who likes spiders

I love spiders, and lots of bugs really. I have zero respect for people who look down on them when they’re just so damn cute.

Like how can anyone look at this and say anything other than “awww”

awww

Yeah you’re right. Look at that little cutie <3

I use the way people treat other animals, especially ones like bugs and stuff, the ones we barely give a second thought about, as a measure of character. Phobias are one thing, but at least have compassion for this other living thing

Very few will get a chance to feel what it’s like to pet a bug and have it go from fearing for its life to trusting you with its life. They genuinely have no framework for a world that treats them as disposable when you show them compassion, and it’s magical how they react.

In other news, grass still green

This was always coming, and we’re going to do fuck all about it. But on the upside, the future is going to be absolutely rad for the .001%

I’m of the opinion that Microsoft was tired of losing money on OpenAI, so made some kind of plan to oust the current CEO, tank the stock price, and be in the perfect position to buy the company and monopolize AI technology. It wouldn’t be the first time they pulled shady crap like that.

Embrace, Extend, Extinguish

> How could an intelligence that requires massive amounts of CPU, RAM, and database storage even conceivably be able to leave the confines of its own computing environment?

Why would it need to leave its own environment in order to impact the world? How about an AGI taking over the remote fly system for an F-35, B-21, or NGAD in order to go all Skynet? It doesn’t have to execute itself on the onboard system of the plane, it simply has to have control of the remote control system. Penetration of and fuckery with the systems that run major stock exchanges present the same problem. It doesn’t need to execute itself on those platforms, merely exert control over them.

The concern here isn’t about an AGI taking over systems in order to execute itself, it’s about AGI taking control of systems away from humans in much the same way that traditional Black Hat hackers would but at a much faster speed and with potentially far less concern for any human cost.

This feels like a weird point to make for OP since I figure anyone here talking about AI is very familiar with distributed networked computing. Botnets have been such a pain in the dick for at least 15 years now. Imagine something that intimately and only knows how to “live” in computing. The distributed areas it could “live” in would give it access to all the resources it needs, directly or not: storing info and using resources of anything it can touch through the network, computers, phones, TVs, cars, door bell cameras, router and networking infrastructure.

I feel there is inevitably either a human-made virus or a standalone AGI that is going to accomplish this. The extent to which it spreads, whether the damage can be recovered from, and how we progress after it are going to be a big defining moment in technological history. The globalized network with everything communicating is the most powerful and least secure supercomputer ever. Those running botnets figured that out a long time ago. All it takes is one AI.

At this point at least, LLMs require vast amounts of GPU time, and the GPUs used likely need to be fairly close coupled to run at decent speeds, and as such one couldn’t spread itself over the vast network of consumer computers. At least not in a way that would still allow it to learn.

Any near-term AGI or similar would be based on a similar approach, making it fairly “safe” in that respect at least. Only a million other disruptive ways it can affect society…

In the event of such a worm/virus, we forget we actually do have a very effective nuclear option: switch off the computers and re-image them. Painful as it might be, we could even shut off or partition the global IP network temporarily if faced with such an existential threat.

We don’t live in a sci-fi novel after all, and this distributed AI wouldn’t be able to hide in a single machine, plotting against us. It would only be able to “think” as a giant networked cluster, something easy to detect and disrupt.

And why would nobody stop it? We are pretty good at stop button technology, for instance. We also have a pretty good grasp of the reset button, but maybe it shouldn’t be one of those holes you always break pens on.

> It doesn’t have to execute itself on the onboard system of the plane, it simply has to have control of the remote control system.

- Planes that are primarily designed to be human-piloted tend to have to be wildly modified to become a drone, or a remote-pilot situation. The F-35, for example, can be heavily modified for this, but is not built for it to begin with. This argument would hold more weight if you were referring to the entire drone fleet.

- (Assuming drones) Generally, the military is pretty secure with these kinds of things, and they won’t allow in external internet connections but will instead have their own internal communications network. For this to be successful, the AGI would essentially have to somehow get by air-gapped defenses and get close enough with a physical body to get a signal. How could they do this with drone pilots in a remote area in a non-internet connected building? The only way would be through the wireless signal. At that point, yes, it would be feasible to take over the drone. I find it very hard to believe that an AGI could do that, magically making a connection to remote, air-gapped systems.

An AGI doing what you’re talking about doing would mean all secure facilities in the world would have just tossed their security practices out the window to begin with and put internet connections inside secure facilities. That’s just not how it’s done. Sure the psychotic wing of the Republican party doesn’t give a shit and Donald Trump doesn’t… but like, reasonable people do, and so security still exists.

> This argument would hold more weight if you were referring to the entire drone fleet.

Sure, and we’re maybe 5 years away from that.

> An AGI doing what you’re talking about doing would mean all secure facilities in the world would have just tossed their security practices out the window to begin with and put internet connections inside secure facilities.

Nearly all of the normal spy activities that can induce someone to action are available to an AGI; Bribery, Compromise, and Relationship. There’s also people who would willingly help because their goals aligned or because they believe things would be better with an AGI in charge.

> …but like, reasonable people do, and so security still exists.

Sure, and that security gets penetrated, and an AGI can do it in the same way it’s done now, only faster and with no controls on its behavior.

You also need to drop the assumption that the AGI or its targets will be American.

-

I think there are real concerns to be addressed in the realm of AGI alignment. I’ve found Robert Miles’ talks on the subject to be quite fascinating, and as such I’m hesitant to label all of Eliezer Yudkowsky’s concerns as crank (although Roko’s Basilisk is BS of the highest degree, and effective altruism is a reimagined Pascal’s mugging for an atheist/agnostic crowd).

Even while today’s LLMs are toys compared to what a hypothetical AGI could achieve, we already have demonstrable cases where we know that the “AI” does not “desire” the same end goal that we desire the “AI” to achieve. Without more advancement in how to approach AI alignment, the danger of misaligned goals will only grow as (if) we give AI-like systems more control over daily life.

But like, we only think about “controlling” its goals and shit when honestly all we need is a fucking stop button like in a fucking factory. Whoops, it’s genocidal again, Claus. All right, Lars, slam the off button and let’s start over.

The stop button problem is not yet solved. An AGI would need the right level of “corrigibility”: a willingness to allow humans to stop it when it is undertaking incorrect behavior.

An AGI that’s incorrigible might take steps to prevent itself being shut off, which might include lying to its owners about its own goals/internal state, or taking physical action against an attempt to disable it (assuming it can).

An AGI that’s overly corrigible might end up making an association “It’s good when humans stop me from doing something wrong. I want to maximize goodness. Therefore, the simplest way to achieve a lot of good quickly is to do the wrong thing, tricking humans into turning me off all the time”. Not necessarily harmful, but certainly useless.
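To make that last failure mode concrete, here is a toy sketch in Python. Everything in it is made up for illustration: the two actions, the reward numbers, and the `shutdown_bonus` parameter are assumptions, not how any real system is built. It only shows that once being stopped is rewarded more than doing the job, a plain reward maximizer picks the wrong thing on purpose.

```python
# Toy illustration only: the actions and reward numbers are hypothetical.
ACTIONS = {
    "do_task_correctly": {"task_reward": 1.0, "triggers_shutdown": False},
    "do_wrong_thing": {"task_reward": 0.0, "triggers_shutdown": True},
}


def best_action(shutdown_bonus):
    """Return the action with the highest total reward.

    shutdown_bonus is the (assumed) reward the agent attaches to being
    stopped by a human after misbehaving.
    """
    def total(name):
        action = ACTIONS[name]
        bonus = shutdown_bonus if action["triggers_shutdown"] else 0.0
        return action["task_reward"] + bonus

    return max(ACTIONS, key=total)


# No reward for being stopped: the agent just does the task.
print(best_action(0.0))
# Being stopped is worth more than the task: the agent misbehaves to get stopped.
print(best_action(2.0))
```

The real problem is much harder than this, since an actual system would not have a neat table of actions, but the incentive flip is the same idea.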

Exactly

I for one don’t understand why people have the need for a Tech Visionary Messiah to cling on to and adore. Steve Jobs, Elon Musk, lots of others, Sam Altman is the latest. They always and without exception turn out to be little human beings with little selfish needs behind their grandiose altruistic sales pitches. People never learn, do they.

Because it’s just human? It’s a lot less common than movie or music superstars anyway, and those are even more unfathomable to me. Like, the producer, sure, but the singer that was groomed from birth to regurgitate boomer poetry?

I guess it makes sense to call them STEM pop stars.

AI isn’t the danger, it’s human application of “AI” that will be horrible as fuck.

It often seems to be… ‘Gee Brian, that’s a great invention! I wonder how we can kill people with it’, the thought having germinated in a slurry of greed and self interest. (Apologies for the slightly jaundiced view of our betters and elders, it comes with age.)

What’s AGI?

Reasonable question. Artificial General Intelligence, as opposed to Artificial Intelligence. Technically LLMs are AI, but they are not AGI. AGI would literally be a human-like consciousness able to think and extrapolate on its own, much more so than the current iterations, which others have noted are more like decision trees.

In other words, AGI is what every layperson thinks of when people talk about AI. It’s (sort of) what you see in the movies.

LLMs, and every other AI technology we currently have, do not actually have any form of intelligence. They’re called AI because the sub-field of computer science that they arose from is called AI, and has been for decades.

Homie got rich and famous by making a chat bot that spits out the internet back at you while spewing out buzzwords like only the best Valley hustlers can.

Personally I’m more worried about the robodogs and terminators that the likes of Boston Dynamics are putting out.

Thankfully, those are still built on known technologies, and unless they start beefing them up security-wise, it’s not impossible to get hold of the battery compartment and rip out the battery, effectively “killing” them. It also wouldn’t be impossible to hack them via their sensors. They likely have some form of wireless communication, and that’s a hole to be exploited.

Also, since they need their sensors to “see” you can also do lots of things to confuse/fuck with their sensors. Like just get close and spray paint any cameras on them.

Still not ideal, and I have the same fears about those as you do, but I do think humans are good enough at guerilla warfare that we would still best a machine.

I mean, we can’t even make a laptop battery that lasts all day. Humans currently can run far longer than a robot can before running out of “energy.” It’s the old “humans never get tired” meme about being an animal chased by hairless apes and how scary it must be. These things will have to be hardwired to a power source or have battery packs that are so huge as to make a human-sized one that can run for a full day nigh-impossible.